One Mailchimp partner audit, selected from a program of agencies and presented company-wide as the model.

The Situation

Mailchimp runs a partner program that connects agencies with customer accounts identified as needing strategic attention. Agencies enter the program, audit those accounts, and deliver their findings; the customer then decides whether to act on the recommendations.

The program produces a lot of audits. Most of them look the same. They identify issues. They surface data. They make recommendations. Too often, they get archived.

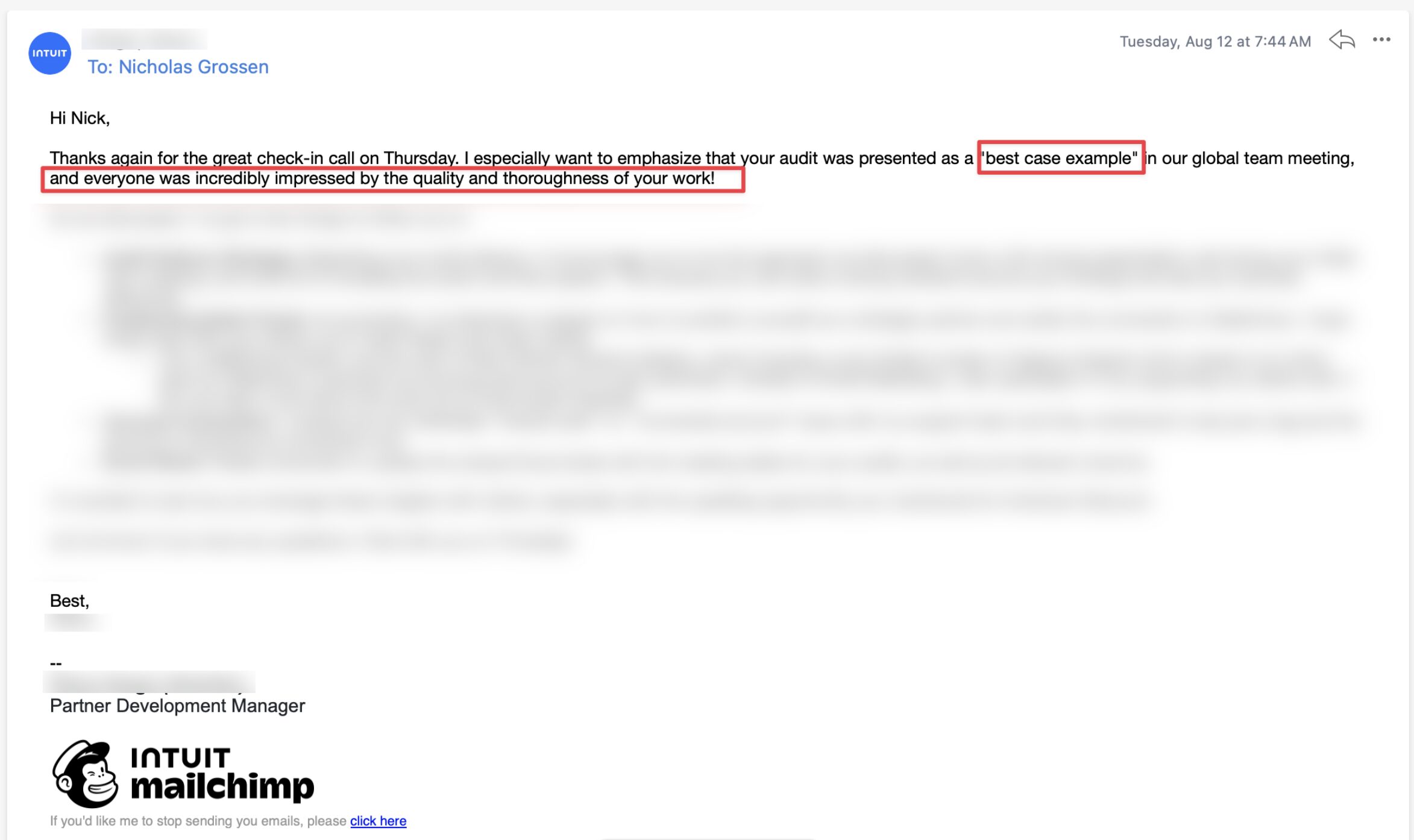

We were one of the agencies in the program. After we delivered audits across multiple Mailchimp customer accounts, Intuit selected one of ours and presented it company-wide as the gold-standard example for the entire program.

This case study is about what made that audit different.

Key Outcomes

- Selected by Intuit as the best-case example across all participating agencies in the Mailchimp Partner Audit Program

- Presented company-wide in a global Intuit team meeting as the standard format for partner audits

- Generated direct positive feedback from the Mailchimp Partner Development team

- Created a repeatable diagnose-interpret-prescribe audit framework now used across other Tailored Edge engagements

- Led to follow-up advisory and implementation conversations

The Primary Challenge

Most agency audits get written for the agency, not the recipient.

They prove the agency knows the platform. They demonstrate technical depth. They surface every issue the auditor can find. What they fail to do is help the customer make a decision.

The customer ends up with a list of things to fix and no idea which to fix first. The auditor ends up looking thorough but generates no follow-on engagement. Mailchimp ends up with a completed deliverable, but not necessarily a customer who knows what to do next.

The audit had to function differently. It had to be technically rigorous enough to be credible, but structured so a non-technical stakeholder could read it once and walk away knowing exactly what to prioritize. It had to be honest about gaps without reading as criticism. And it had to make the next step obvious without forcing a pitch.

The Goal

Build an audit format that worked as strategy, not as a report card. The deliverable needed to leave the customer with three things: a clear understanding of what was working, a prioritized view of what needed attention, and a defined path forward they could actually execute on.

Our Approach

We treated the audit as a decision-making tool, not a documentation exercise.

That changed how every part of it was built. The structure had to support a reading flow that moved the stakeholder from “here’s what I have” to “here’s what I should do next” without overwhelming them in the middle. Technical findings had to be translated into business implications, because the person reading the audit was usually not the person who would implement it. And the recommendations at the end had to function as a real path forward, not a wishlist.

The audit was also presented live before being shared as a document. That sequencing matters. A stakeholder who has been walked through the audit reads it differently when it circulates afterward. They already know the story it is telling. The document becomes reinforcement, not first contact.

The Framework: Diagnose, Interpret, Prescribe

The signature of the audit was a three-part structure that ran through every slide: the data, an interpretation that contradicted or complicated the surface read, and a specific next step the customer could act on.

Open rates were rising across the account. Surface read: the campaigns are working better. Audit read: the list is shrinking faster than engagement is improving, and the rising rate is a function of disengaged subscribers leaving rather than active subscribers engaging more. Recommendation: shift focus to quality acquisition and re-engagement before celebrating the metric.
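The arithmetic behind that audit read can be sketched with a few lines of Python. The figures below are hypothetical and chosen only for illustration; they are not data from the audited account. Open rate is opens divided by delivered emails, so the rate climbs when disengaged subscribers drop off the list, even if the number of people opening stays flat.

```python
def open_rate(opens: int, delivered: int) -> float:
    """Return open rate as a fraction of delivered emails."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    return opens / delivered


# Hypothetical figures for illustration only (not from the audited account).
before = {"delivered": 10_000, "opens": 2_000}
# 2,000 disengaged subscribers leave the list; the same 2,000 people still open.
after = {"delivered": 8_000, "opens": 2_000}

rate_before = open_rate(before["opens"], before["delivered"])
rate_after = open_rate(after["opens"], after["delivered"])

print(f"open rate before: {rate_before:.1%}")  # 20.0%
print(f"open rate after:  {rate_after:.1%}")   # 25.0%
print(f"change in opens:  {after['opens'] - before['opens']}")  # 0
```

In this sketch the rate improves by five points while engagement itself has not moved, which is the pattern the audit flagged.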

Send times were anchored at 8AM, the slot used in 173 campaigns. Surface read: 8AM is the proven slot. Audit read: 8AM was the default, not the winner. 9PM, 3PM, and 4PM all outperformed it on opens and clicks, even though those slots had seen only minimal send volume. Recommendation: test the underutilized windows that the data already suggests are stronger.
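A comparison like this one can be approximated by grouping campaigns by send hour and comparing each slot against the default. The sketch below is a minimal illustration, not the method used in the audit: the campaign records, field names, and the helper functions `summarize_by_hour` and `slots_worth_testing` are all hypothetical.

```python
from collections import defaultdict

# Hypothetical campaign records for illustration; field names are assumptions.
campaigns = [
    {"hour": 8, "delivered": 1000, "opens": 200, "clicks": 20},
    {"hour": 8, "delivered": 1000, "opens": 210, "clicks": 22},
    {"hour": 8, "delivered": 1000, "opens": 190, "clicks": 18},
    {"hour": 15, "delivered": 1000, "opens": 260, "clicks": 30},
    {"hour": 21, "delivered": 1000, "opens": 280, "clicks": 35},
]


def summarize_by_hour(records):
    """Aggregate campaign count, open rate, and click rate per send hour."""
    totals = defaultdict(
        lambda: {"campaigns": 0, "delivered": 0, "opens": 0, "clicks": 0}
    )
    for record in records:
        slot = totals[record["hour"]]
        slot["campaigns"] += 1
        for key in ("delivered", "opens", "clicks"):
            slot[key] += record[key]
    return {
        hour: {
            "campaigns": slot["campaigns"],
            "open_rate": slot["opens"] / slot["delivered"],
            "click_rate": slot["clicks"] / slot["delivered"],
        }
        for hour, slot in totals.items()
    }


def slots_worth_testing(summary, default_hour):
    """Return hours that beat the default slot on both opens and clicks."""
    base = summary[default_hour]
    return sorted(
        hour
        for hour, stats in summary.items()
        if hour != default_hour
        and stats["open_rate"] > base["open_rate"]
        and stats["click_rate"] > base["click_rate"]
    )


summary = summarize_by_hour(campaigns)
candidates = slots_worth_testing(summary, default_hour=8)
print(candidates)  # [15, 21]
```

Keeping the campaign count next to each rate matters here. A slot with one or two sends is a candidate for testing, not a proven winner, which is why the recommendation was to test those windows rather than switch to them outright.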

Subject line patterns showed the same dynamic. Lines leaning on tech specs and repeated phrasing underperformed on opens and drove higher unsubscribes. Lines focused on guest benefits and outcomes performed substantially better. Recommendation: shift to benefit-led subject lines with implied outcomes, with specific replacement copy provided directly in the audit.

The strongest recurring observation underneath these findings was that the account’s defaults had calcified. Sends went out on Tuesdays because they had always gone out on Tuesdays. Sends went out at 8AM because they had always gone out at 8AM. Subject lines leaned on the same product names because that was the established pattern. Each of those defaults was underperforming a tested alternative that already existed in the account’s own data. That is not three findings. It is one finding showing up in three places, which gave the customer a single thesis to work against rather than a scattered list of optimizations.

How the Framework Showed Up in the Deliverable

A Four-Section Flow That Moved From Diagnosis to Decision

Performance and Insights came first to establish the data baseline. Audiences and Automation came next to surface the structural gaps that performance metrics alone could not explain. Design and Branding sat third because it mattered, but mattered less than the layers above it. Recommendations and Ideas came last, where they belonged: after the diagnosis was complete, not bolted onto the side.

This sequencing was deliberate. Front-loading recommendations or scattering them throughout forces the stakeholder to keep reframing their attention. The four-section flow let the reader move forward without backtracking.

Visual Status Indicators That Made Structural Gaps Scannable

The Audiences and Automation section used a checkmark and X system to map every structural element of the account against what should have been in place. Single list in use: X. Behavior-based segmentation: X. Preference center: X. Welcome sequence: present but underbuilt. Auto-tagging: not present. Re-engagement flow: not present.

A stakeholder could read that section in under a minute and walk away with a complete picture of what infrastructure was missing. Surfacing the structural assessment as a status grid let the reader see the shape of the gaps before reading the detail.

Annotated Visual Feedback on the Customer’s Actual Work

The Design and Branding section did not describe the customer’s templates in the abstract. It included the actual email, marked up with arrows and annotations pointing at specific friction points. CTA placement was circled. Copy hierarchy was flagged. Specific replacements were named (changing “Request a quotation” to “Get Your Quote,” for example).

Marking up the work directly does two things. It signals operator-level attention, which is hard to fake. And it makes the feedback unmissable in a way that prose descriptions cannot.

A Tiered Implementation Menu That Replaced the Sales Pitch

The audit ended with a tiered implementation menu covering preference center setup, audience consolidation, account cleanup, and core automation builds. Each option included clear scope and pricing, which let the customer understand the path forward without being pushed into a sales conversation. The customer could also choose to take the audit and execute internally, which preserved the integrity of the partnership.

Why This Worked

This audit changed the customer’s decision-making, which is what separates a strategy document from a report. The four-section flow gave the stakeholder a reading sequence that mirrored how decisions actually get made. The diagnose-interpret-prescribe pattern kept every finding paired with a usable next step. The status indicators made structural gaps immediate. The marked-up creative work made design feedback unmissable. The tiered implementation menu turned the audit from a static deliverable into a doorway.

Throughout, the audit reinforced our role as a strategic partner operating within Mailchimp’s ecosystem rather than an outside critic pointing out flaws. Findings were framed in terms of customer outcomes (deliverability, list health, conversion) rather than platform shortcomings. Recommendations were aligned to Mailchimp’s roadmap and feature set, not generic email marketing best practices. The audit advocated for the customer while staying inside the partner relationship.

When Intuit reviewed the audits coming out of the program, they were looking for the format that demonstrated partner-tier strategic thinking. They selected this one because it solved a problem most partner audits do not even acknowledge: the gap between identifying issues and helping a customer act on them.

Strategic Takeaway

The difference between an audit that lands and an audit that gets archived is not the depth of the analysis. It is the structure of the delivery.

When the format is built around how the recipient will read it, what they need to decide, and what comes next, the audit stops being a report and starts being a strategy document. The customer walks away knowing what to prioritize. The agency earns the next engagement without selling for it. The platform partner gets a customer who is more likely to renew because the audit produced a path forward, not just a list of issues.

That is what made this audit the one Intuit selected: not just the analysis, but the architecture of the delivery.